Well, the good news is that nobody has to listen to my constant debating whether I should get a Vision Pro headset anymore. The bad news, of course, is that now I’m going to be constantly talking about what it’s like to use the Vision Pro headset.

By the time I’d ordered one, it wasn’t scheduled to arrive until the end of February. But as early as the day after launch, I heard that there were plenty of opportunities to make a same-day order, or even to just walk in and buy one. I’m not sure whether that means demand for the device was overstated, or whether Apple had anticipated the rush of early adopters, and I don’t think that it actually matters that much. I doubt that anyone realistically expected this to be flying off the shelves, and anyone outside of Apple who declares this a flop or a hit within the first year and a half (at the earliest) is being foolish.

So far I’ve only used it for about 10 hours, and only half of that with the correct lenses (see below). And my early impressions (spoiler) don’t differ all that much from the non-Verge reviews that I’ve seen so far. The stuff that it does well is amazing, it’s easy to imagine (and, obviously, probably much harder to implement) all the ways that future versions are going to improve on it, and it really does feel like the start of a new platform, instead of just a failed experiment. With the emphasis on “start”: it’s still absurd to call this a “developer kit,” but it’s also not yet something that will be useful to more than a fairly niche audience.

For context, if you’re stumbling onto this post somehow: I’ve used the Oculus Rift 2, the HTC Vive, the HTC Vive Focus, PSVR, the Quest, and the Quest 2. And I’ve worked on a couple of VR and AR projects. This is my “first few hours impressions” post. (There are many like it, but this one is mine.)

Demos and Pick-up

I had a surprising amount of anxiety about the whole process of getting a demo and a fitting in the store, which was a big part of why I was (initially) content to wait for it to be delivered. It turned out that I needn’t have worried, since the whole process was painless until I got home and saw the charge to my credit card. I keep forgetting that Apple, like Disney, is masterful at making it easy to give them money.

Based on the controlled demos that journalists and YouTubers have been getting since WWDC, I’d imagined that the demos would be conducted in private, tastefully-decorated showrooms hidden behind the big mysterious wall at the back of every Apple Store. But now that the Vision Pro has gone public, there’s no need for secrecy or discretion, and I imagine that Apple even wants to keep it public, to help normalize the sight of people wearing the headset.

When I went to pick mine up, they sat me down on a stool and brought out a demo unit for a test fit with the size of light seal I’d been assigned earlier by the app. There was a machine in the demo area that reads prescriptions from eyeglasses, so they took mine for a minute to get the measurements and find some lenses with a close-enough prescription. I tried it on, confirmed that it fit comfortably, and then I saw the giant “hello” floating in the middle of the Glendale Galleria. After that, I waited for a bit while they pulled up my order from the back room, and I chatted with the store rep who was helping me about his impressions of the headset. He offered a full demo, but I declined, and there was no pressure for any of it. I could’ve opted out of the fit test, even.

Because of the cost and the mystique around the Vision Pro, I’d expected there to be some kind of cult-like welcoming ceremony associated with the whole process. Remember those ads they used to show for Saturn cars, with a bunch of salespeople standing in a semicircle and applauding as a young driver got their keys? There was, thankfully, nothing like that. There was an extended moment where every Apple employee in the store began clapping, and they kept it up for several minutes, but that was just a very sweet send-off for a colleague’s last day, and had nothing to do with anyone buying a VR headset.

Comfort and Getting Started

It took a couple of days for my prescription lenses to arrive, so I first used it without them. I’d been concerned that since Apple was so adamant about requiring custom lenses, it’d be unusable without them. The reality, probably unsurprisingly, is that everything works, and it just looks like it does when I look around the room without my glasses on.

In retrospect, I’m wondering whether the dramatic “this is magical!” reaction I had when first putting on the headset last year was just due to the fact that I didn’t have to put my glasses on. When I was working on a VR project, I was having to put on and take off the headset dozens of times a day, and it was a constant hassle having to wear one over eyeglasses. Most annoying was that the headset would take my glasses with it every single time I took it off.

I also suspect that using glasses inside the Vision Pro would cause problems with the eye tracking. And requiring more room in the light seal would further reduce the field of view, which already seems a bit smaller than that of other current VR headsets. And there are various other issues that come from wearing glasses inside an HMD, like weird glare and reflections, scratched lenses, and having to make the headset large enough to accommodate them.

But I suspect that Apple decided it’d be all right to mandate lens inserts simply because it’s a better experience. People are already going to be paying a premium, even in later revisions after the price goes down, and there are so many benefits to using lenses that it’s worth the cost.

I’ve read the complaints about the weight, and specifically the weight distribution, of the Vision Pro, and I still say it’s the most comfortable VR headset I’ve worn. (The PSVR is probably second, though with it I had a lot more issues with light leaking and getting a good fit.) I’m not claiming that this is a completely casual experience, or that you forget you’re wearing it, but I have already been able to wear it for longer stretches than are comfortable in other headsets. In fact, my biggest problem with it isn’t the weight or the pressure against my face, but just the fact that my eyes water a lot whenever I’m looking at any light source.

I’ve also read the weird claim that the included “dual loop” strap is universally understood to be a better and more comfortable fit, and the inferior “solo knit” strap was made the default by Apple solely because it looks cooler. I say that’s not just bull; it doesn’t even make sense. When was the last time Apple included any extra accessories they didn’t need to? I’ve tried both straps, and not only is the “default” one easier to put on and take off, but I think it’s a lot more comfortable in my case. Weird, reality-distortion-field theory, I know, but maybe Apple included two straps because people have different heads, and find different things comfortable?

Passthrough

One of the most surprising things about the Vision Pro announcement was that it was a VR headset being used to do AR. Other VR headsets have passthrough cameras of varying fidelity, and other AR headsets have projected virtual images onto a “real” display with varying levels of success. I don’t think Apple’s approach is just a holdover until technology advances to the point where they can ditch the screens; I think it’s the basis of the entire platform.

I haven’t yet used the Quest 3, but I’ve seen videos of its passthrough/mixed-reality mode. While it seems miles ahead of the Quest 2, it’s still not anywhere near the level of what Apple’s done. I’ve seen a couple of reviews that suggest that the Vision Pro’s passthrough system is good but not that much better than what’s available in other, cheaper headsets, which is a claim so baffling as to be unbelievable. Responsiveness is near-instantaneous, there’s almost no warping, and phones and screens are (mostly) readable.

That’s not to say it’s perfect; they’re still cameras after all. If you move your head quickly, you’ll see motion blur. If there’s not enough light in the room, it looks more like bad video with the ISO turned up too high. Adjusting your gaze from a bright light to a dimmer one will result in some obvious flicker. It’s astounding that it works as well as it does, but they’re still cameras.

Everyone seems to be assuming that the Vision Pro is just an interim solution until Apple can do what it really wants to do, which is obviously make a real AR headset that projects digital images over your real view. Last year, I was convinced that this was false. After all, they’ve already done the impossibly hard work to achieve an excellent passthrough experience in a VR headset, so this could be the best of both worlds: overlays onto your reality, or completely immersive experiences replacing your reality, all in the same device.

Now, I’m not as convinced. Not just because transparent screens seem to be getting better, making “true” passthrough more feasible within a decade. The bigger problem still seems to be light: not just the light coming through the lenses, but the light coming in from everywhere else. How do you have the “normal-looking” glasses that everyone seems to want, and still block out enough light to deliver a completely immersive experience?

But mainly, I’m not convinced that it even matters in terms of what Apple’s ultimately trying to do. The technology used to show you your surroundings isn’t as important as making sure you always start by seeing them, and you always have a way to get back to them. The Vision Pro has inconceivably complicated technology, including an entire second system-on-a-chip, devoted to making sure that you don’t start out in a black void, fumbling around for a controller, or setting up a “safe area.”

Instead, one second you’re in your room, and the next you’re in a slightly darker version of your room, with a big cursive “hello” hovering in view. So much of the work that went into this headset was dedicated to making the jump from “outside” to “inside” as seamless as possible.

Looking and Pinching

Which extends to the input. There’s no fumbling around in the dark for controllers if there are no controllers!

The eye tracking and hand tracking/gesture recognition in the Vision Pro are astoundingly good, even if they’re not quite as magical as my first impression was. When you’re just interacting with the home screen, it’s almost uncanny how it seems to be reading your mind. But the more you add windows with different displays and varying sizes of buttons and switches, the more opportunities you have to find flaws.

It doesn’t work quite as well as the lights get dimmer, for one thing. Eye tracking seems to be mostly unaffected by low light, but gesture recognition can get noticeably worse. I’ve also had some issues with scrolling in general; looking and pinching works so well that it almost immediately feels natural, but I have yet to have a great experience scrolling through long lists of things without feeling like I’m in Minority Report, or like I’ve got my Mastodon feed stuck to my fingers and can’t quite get it off.

All of that feels like it will improve as the operating system matures, and as developers settle on conventions that are better suited to it, instead of just directly translating touchscreen UIs.

The issue that’s potentially a bigger problem is that it doesn’t allow for a disconnect between what you’re currently looking at and what you’re currently interacting with. (The closest comparison I can think of is whenever I tried to use an X11 system, where I could never quite get used to the concept of your input being locked to the window currently under your mouse cursor, instead of having to click on a window to give it focus.) I never noticed before how much I tend to glance away from a window while I’m still typing or clicking on something, or how much I look ahead in a list while I’m scrolling through it. I still don’t have a good notion of whether the Vision Pro’s approach is something that will quickly feel completely natural, or will require an even more dramatic rethinking of how UIs work.

Apple Details

When I first tried the Vision Pro, my overwhelming reaction was, “Ah, Apple waited until they could get everything right.” And I still think that’s the case, although I’d change “everything” to “most of it.”

So much of it seems to be about reducing the “friction” of using a headset computer, to make it as seamless and intuitive as possible with early-2020s technology. It makes it feel as if everything else was rushed to market. Which isn’t necessarily a knock on other consumer headsets (I think the Quest 2 is really good; it’s just not good at the stuff that Mark Zuckerberg wants it to be good at) as much as a reflection of the fact that Apple’s in a position to give products enough lead time, and to have enough traction that it can insist on its own particular idea of how a “spatial computing” platform should function.

Part of that is just taking advantage of its mostly-closed ecosystem, where they control the hardware and most of the software. That means you connect the Vision Pro to your Apple ID, and you’ve got immediate(-ish) access to everything on your iPhone or iPad. You’ve got any movies you bought over Apple TV, except now they’re in 3D. To start screen-mirroring with your Mac laptop, you can just look at it and tap “Connect.”

But there are lots of strictly unnecessary flourishes to it as well. My favorite is the subtle shadow that appears under each window, to help place it in space. It either casts onto the floor or, remarkably, onto a desk or table, without your having to explicitly map out your room. It really helps sell the notion that these are placed inside your physical space. (After my first time using the headset, I was convinced that they were doing really elaborate real-world light estimation on all the UI elements. Now I honestly can’t tell whether they’re doing any light estimation, or it’s just that the simple shadows are so convincing.)

There’s also the simple fact that Apple’s one of the only companies making operating systems that still conform to a set of consistent human interface guidelines. Most things in visionOS have a consistent look to them, and most of them behave predictably. It’s a sharp contrast to the Quest, which has been gradually refining and improving the look of its front end, but then all bets are off as soon as you launch an app.

Personas (Non Gratas)

Believe me, I really wanted to make my own creepy “persona” on the Vision Pro, for the big laughs that would inevitably result in trying to translate my Cryptkeeper-like appearance to a Lawnmower Man-like virtual avatar. But I’ve been foiled at every attempt.

The Vision Pro just plain doesn’t like my face. (Which is another sign that it is an advanced device of refined taste.) Every time I try to go through the process of making a “persona,” which involves taking off the headset and looking at it while a voice gives you Simon Says-like instructions for turning your head and making faces, it enters into a constant loop of contradictory instructions, as it tries to make sense of what it’s seeing. It’s especially frustrating/comical since the instructions have to use the approved product name every time, so it’s “Move the Vision Pro a little closer. Move the Vision Pro a little farther away. Move the Vision Pro a little closer. Hold the Vision Pro higher. Tilt your head down slightly. Tilt your head up. Take off your glasses. Move the Vision Pro a little closer. Make a neutral expression. Move the Vision Pro farther away.”

If I had to guess, I’d bet that the thing is having trouble with the combination of old age + hair. I’ve seen other beardos make reasonable avatars, so it might just object to mine being bright white. (Hey, you and me both, Vision Pro.) And I suspect that my largely genetic under-eye bags make it look like I’m always wearing glasses. (The hitchhiking ghosts at the end of Walt Disney World’s Haunted Mansion have the same issue, for what it’s worth: the effects never seem to understand where my face is on account of my beard.)

I’m hoping that a future OS update will make it possible, so I can at least get an uncanny valley version of me working before the feature is either improved to the point where it’s no longer funny, or it’s scrapped altogether.

Photos, Videos, and Panoramas

I’ve been taking “spatial” photos and videos with my phone in preparation for getting a device that could actually view them in 3D. So far, I haven’t been blown away by the results. Generally, you need to have the right amount of light, be the right distance away, and keep the camera stationary to get the best effect. I’m still looking forward to experimenting with it some more.

What has been surprisingly effective are the panoramas. I’ve been taking them for years, any time I found myself at a scenic vista, but they never seemed worth the effort. Looking at them in the Vision Pro finally makes them worth it. There’s no 3D effect, but it does wrap the panorama around you, making everything life-size and surrounding you with the view. It’s remarkable to see photos from as far back as 2016 being re-presented as if they were new.

And your videos don’t need to be 3D to benefit from being blown up. I watched a video that I’d taken a couple of years ago of the Disneyland marching band playing on Main Street, and it actually made me a little bit emotional. I’m used to recording stuff “just in case,” even though there’s rarely a chance to re-watch any of it. Having the chance to watch them again, blown up to full size, has me eager to go back through my older videos.

Landspeeder Drive-In

As for the real, professionally-produced immersive videos: they’re really good. If you’ve seen 360 videos from YouTube or elsewhere via a VR headset, then the Vision Pro’s likely won’t be so novel as to be overwhelming. But the increased resolution, professional lighting and cinematography, and greatly improved displays all take them to the next level.

I’ve seen two of Apple’s so far. One featured a woman walking barefoot across a highline stretched across a chasm against a sheer cliff face, and it was harrowing, and I had to remind myself how well-made and beautifully shot it was so that I didn’t just immediately nope out of it. As unsettling as that was, though, for me it wasn’t as viscerally upsetting as the video of a recording session in Alicia Keys’s studio. No offense to her intended; it’s just that the video is shot to look as if you’re seated inside the studio, just feet away from her, and she frequently sings directly to the cameras, and it made me intensely uncomfortable. There’s a reason introverts don’t buy front-row tickets to concerts.

I was already looking forward to the Disney+ app, as one of the things that made the Vision Pro seem directly targeted at me. It did not disappoint. The image they used from the teaser video at WWDC showed someone watching The Mandalorian from inside Luke’s landspeeder, and I was pretty much doomed to buy one just from that.

After seeing it in person, I frankly think they undersold it. The environment really is set up like a drive-in theater for landspeeders, all parked in the desert just outside Mos Eisley, with a Jawa sandcrawler nearby. As the movie starts, the lighting shifts from day to night, and you can see the lights from the other vehicles and, if you turn around, the dim lights of the city buildings behind you. A particularly wonderful touch: after the movie plays for a minute or two, the lighting slowly gets brighter, as if your eyes are adjusting to the darkness.

I love it not just for the effect itself, but for the whole idea behind it. It’s exactly the right tone of fantastic-but-also-kind-of-silly that makes me go apeshit over Star Wars.

There’s no denying it: watching media is absolutely the best part of the Vision Pro in its current incarnation. Back when the iPad was first released, I was reluctant to acknowledge that it was best at watching videos and reading comic books, because I really wanted it to have a future as a general computing platform instead of just being yet another screen to watch movies. As the iPad has matured, it’s found more ideal use cases, especially art creation. I suspect that the Vision Pro might likewise never become a completely general-purpose computer, but that over time we will see some specialized uses for it where it excels. And it will remain the best platform for watching 3D movies.

Wall-Sized Monitor

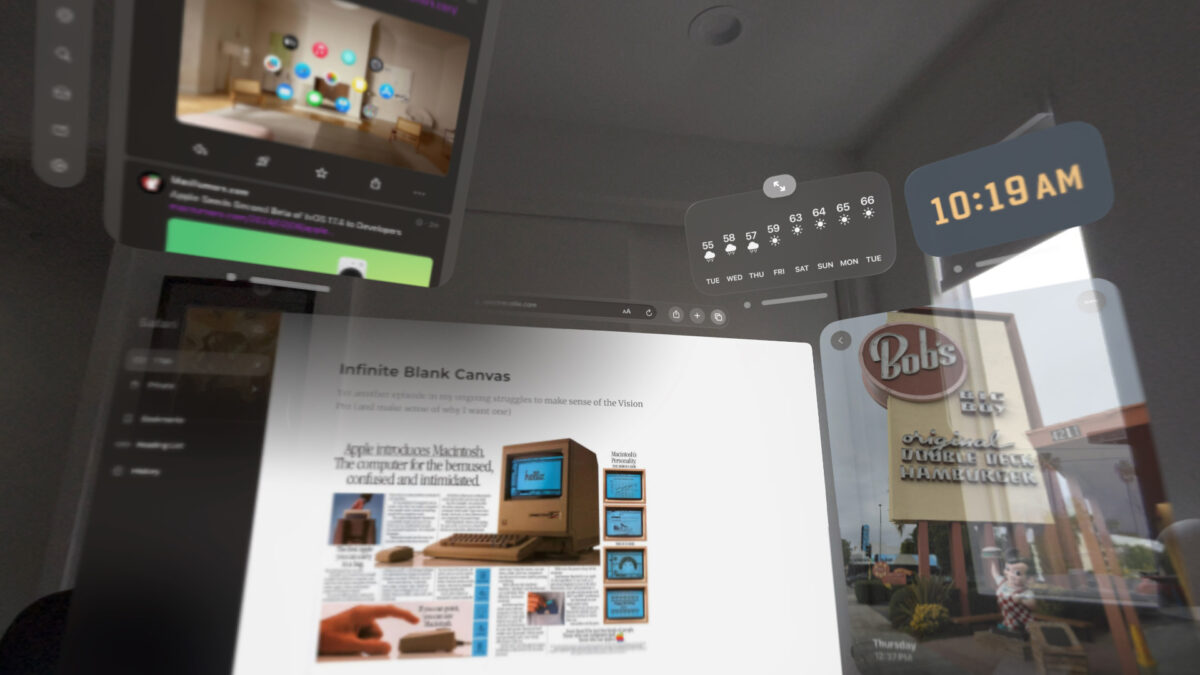

I’d imagined that being able to mirror my Mac’s display, effectively giving me a wall-sized monitor for my laptop, would be the “practical” feature that justified early adoption of the Vision Pro as more than just an expensive toy.

The reality is that it’s very impressive, but it’s not going to be my first choice in my home office. I’ve got a 27″ monitor that I bought when I started working from home, and it’s just more comfortable to use it. As good as the screen mirroring is (and so far it’s been as seamless as I could imagine), it’s still screen mirroring, which means a slight degradation of image quality. I tried comparing Safari running full-screen on my Mac against the native version of Safari running in the Vision Pro, and the native version is, completely unsurprisingly, much sharper.

None of that is a surprise. Native apps are always going to look better and perform better than ones running on the Mac, both because the display is getting mirrored, and because the whole visionOS UX is being mapped to an operating system that wasn’t designed for eye tracking and gesture recognition. I’m hoping that more apps are given native visionOS ports, so that we’ll gradually be able to do more on the headset itself and have to rely on the Mac only for stuff that just can’t be run elsewhere.

It’s interesting that the first company to put out a full suite of productivity apps for the platform is Microsoft. I’m not convinced I’d rather use Word in a headset than just type on my monitor, but if you’re going all-in on a distraction-free environment, I can kind of see the appeal of writing the Great American Novel from the middle of a national park.

And I’ve said it countless times before, but I can’t repeat it enough: I hope Apple finally relents on all the restrictions on running Xcode and other development environments on other operating systems, so we’re not limited to the Mac for software development.

So far, I’ve used the screen-mirroring from the Mac to the Vision Pro to run Panic’s Nova and the Playdate simulator. Is that my preferred development environment? Nah. But I made the joke that I needed a state-of-the-art, expensive VR headset to do script programming for a 1-bit game platform, so I had to at least try it.

VR Games

I’ve already complained that Apple has no interest in advancing the state of VR games, even though the hardware itself is more than capable of it, and that complaint still stands. But it makes more sense now, after using it for a while. Part of what makes the Vision Pro feel as frictionless as possible for 2024 technology is that it can be used while seated, with just your hands. It doesn’t demand you have controllers, and it doesn’t make you map out a safe space. It will happily drop out of immersive mode whenever it detects you’re getting too close to something, or if someone is entering your field of view. (Or a cat. One of the most pleasant surprises so far was when I was sitting on my couch at the top of a mountain in Hawaii, happily typing away at my laptop, when suddenly the ghostly face of my cat broke through the clouds, demanding pets.)

The only AR game that I’ve tried so far on the headset is the “game room” app that’s included with Apple Arcade. I tried playing its version of Yahtzee, and it felt like an early release on a new platform: kind of clunky, and interacting with the pieces directly doesn’t add much of anything. I’m hoping that the Quest 3’s embrace of “mixed reality” games will help make it feasible for developers to target both platforms. There’s a Lego game that looks intriguing, but I haven’t tried it yet.

One thing that I hadn’t expected: using the Vision Pro for a while has given me a greater appreciation for the Quest. It seems like a lot of people, including the owners of the Quest platform, think that it’s unambitious or demeaning to describe it as a gaming platform. I don’t think so at all. I’ve got a Mac, and I’ve got a PS5, and I think the relationship between the Vision Pro and a Quest headset could be exactly the same. It’s really, really good for room-scale VR games running natively, and it works surprisingly well running VR games off my Steam library on a now-aging Windows machine. Once my credit card recovers from the thrashing I’ve just given it, I’m actually inclined to pick up a Quest 3 as well. (I still have to finish Alyx, after all.)

A Few Highlights

With the caveat that I haven’t had it long enough to try out very much, here are a few highlights:

- I bought the Widgetsmith app a while ago to support the developer, but I honestly haven’t had much need for it on iOS or iPadOS. It’s very useful on the Vision Pro, though, letting you put up a clock in the corner of the room, or the weather just above a window. It’s easy to lose track of time otherwise.

- Sky Guide is already a great app for stargazing using the iPhone, but the visionOS version lets you turn your room into a huge planetarium. As it stands now, the main interaction is the ability to see constellations fade into view as you look around, reach up and pull them out of the sky, and get a full description of them. I’m hoping a future version lets you point at celestial objects for more info, since I’m most often using the app to ask “what star is this?”

- The iPad version of Valve’s Steam Link runs inside a window, which is a pretty good option for people with a huge library of 2D games who really want to play them in the middle of Yosemite. I haven’t tried this one past verifying that it works, because I haven’t yet linked a game controller to the Vision Pro.

I haven’t seen anything yet that would make this version of the device an unqualified “must have.” Even the media apps, which deliver the best experience of watching a 3D movie that I’ve ever seen, aren’t enough to make it a “must have.” I think it’s been pretty clear that buying into the Vision Pro in its current incarnation is for people who are excited about the potential of it, more than what’s available on day one.

Mac Plus+

I’ve been reading and watching a ton of reviews and tepid takes on the Vision Pro for a while, and it’s settled into a feedback loop. Some observations are repeated as if they were universal truths, even though they don’t apply to everybody (I think it’s as comfortable as any other headset I’ve used), or are simply incorrect (it’s absurd to say that you can’t share what you’re seeing with someone else, when you can broadcast your display to any device running AirPlay). There’s a real “I was promised magic!” feeling to the reviews, and to me it says more about the current state of tech journalism than about the actual product they’re supposed to be reviewing.

If you suspect that you might want to get this device, or even if you have no intention to buy it but just want to see what all the fuss is about, schedule a demo at the Apple Store. They make it a lot easier than I’d expected, and 30 minutes will give you a great idea of what it’s like. I don’t believe it’s the kind of thing where your opinion of it will gradually change over the course of several weeks. It’s either going to convince you “I must have this,” or it’ll convince you that you don’t mind waiting for a future version that’s better, faster, and cheaper.

Ultimately, I think that the best comparison isn’t really “an iPad for your face,” as I’d been assuming. I think that the Vision Pro in 2024 is actually most like the first Macintosh in 1984. I mentioned that to a coworker, and he said “or the Newton.” They’re similar in that I also wanted a Newton very much, but could never afford one. But different in that I could immediately think of a bunch of useful things that the Newton could do.

Like the Mac, the Vision Pro does much of the same stuff as other, similar computers (VR and mixed-reality headsets, in this case), but it goes all-in on a specific idea of presentation and how people will interact with their computers. It’s very expensive, and it hasn’t yet established what exactly it can do that no other device can do. So its appeal isn’t necessarily in its functionality, but in its whole “look and feel.”

The Vision Pro isn’t as dramatic a change as the first Mac was; its UI is still heavily influenced by the iPad, to keep everything familiar. I bet that the platforms will diverge over time, as visionOS builds up more of an app library, and developers get better at distinguishing what works really well with eye tracking and hand gestures vs. multitouch. (Bringing long-presses over from iOS and iPadOS just feels wrong to me, for instance. That would be the perfect opportunity to introduce a second gesture besides pinching, to take the place of the right mouse button and the long press.)

Back when the device and the platform were more theoretical, instead of an actually shipping product, I wasn’t sure whether it had any future beyond the initial novelty. I really like it so far, but it was far from certain that enough other people would find enough value in it — and get over short-sighted objections to it — that it could be sustainable.

That still may be the case; Apple can’t force people to buy things they don’t want. (Yet.) But after seeing the launch and trying out the first iteration, I get a strong sense that Apple’s got enough confidence in it as a platform that it’s ready to be patient and let it find its audience. It’s tempting to think of it only in extremes: either they’re selling VR hardware and hoping developers will be able to figure out what it’s actually useful for, or they’re delivering a fully realized vision of the future of computing, and all of the rough edges, or holes in the software library, are proof that VR and AR are doomed to be little more than novelty.

The reality is somewhere in the middle. They’ve built some amazing technology, they have strong ideas about what “normal” people’s experiences in VR should be like, and they’ve given developers the most complete set of tools available (minus game development, perhaps) to translate their ideas to VR and AR. I think there’s a ton of potential here. It’s broken through my disappointment that VR hasn’t yet had the huge impact that I’d hoped it would have, and it’s made me as excited as I was when I first tried Valve’s demos on the HTC Vive. And while I’m waiting for the future to happen, I’ll be watching 3D Disney movies from inside a landspeeder.